SUNET Internet2 Land Speed Record: 69.073 Pbmps

Swedish University Network

Börje Josefsson 2004-04-14

SUNET is the organization behind the national research and education network (NREN) of Sweden. SUNET operates the GigaSunet network, which is built with 10 Gbit/sec DWDM connections in a redundant infrastructure, connecting PoPs in 22 cities nationwide, with redundant 2.5 Gbit/sec connections as access links to the universities. It is used by researchers, teachers, students, and administrative personnel at 32 universities and colleges nationwide. In addition, some central government museums and external organizations are also connected to the network.

Internet Land Speed Record:

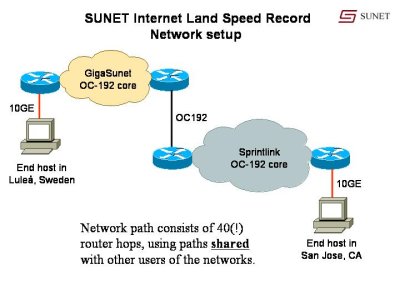

On April 14, 2004, SUNET transferred around 840 gigabytes of data in less than 30 minutes, using a single TCP stream between one host at the Luleå University of Technology (LTU) in Sweden (close to the Arctic Circle) and one host connected to a Sprint PoP in San Jose, CA, USA. The network path consists of the GigaSunet backbone, which is shared with the other users at the Swedish universities, and the SprintLink core network, which is used by all SprintLink customers.

The transfer was done with the ttcp program, which is available for many different platforms. We chose NetBSD for our tests because of the scalability of its TCP code. ttcp is usually included in Unix and Linux systems. Windows users can download a Win32 version from pcusa.
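For readers unfamiliar with ttcp, the measurement idea is simple: one side writes a fixed number of bytes from memory into a single TCP connection, the other side reads and discards them, and throughput is the byte count divided by the elapsed time. The Python sketch below illustrates that idea over the loopback interface. It is not ttcp itself; the function names `receive_all` and `run_transfer` and the 64 KiB buffer size are assumptions made for illustration.

```python
import socket
import threading
import time


def receive_all(server_sock, result):
    """Accept one connection, then read and discard data until the sender closes."""
    conn, _ = server_sock.accept()
    total = 0
    with conn:
        while True:
            chunk = conn.recv(65536)
            if not chunk:
                break
            total += len(chunk)
    result["bytes"] = total


def run_transfer(total_bytes, buf_size=65536):
    """Send total_bytes over one TCP stream on loopback; return (received, seconds)."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]

    result = {}
    receiver = threading.Thread(target=receive_all, args=(server, result))
    receiver.start()

    payload = bytes(buf_size)  # memory-to-memory: the content is irrelevant
    sent = 0
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as sender:
        while sent < total_bytes:
            n = min(buf_size, total_bytes - sent)
            sender.sendall(payload[:n])
            sent += n
    receiver.join()  # wait until the receiver has seen end-of-stream
    elapsed = time.perf_counter() - start
    server.close()
    return result["bytes"], elapsed


if __name__ == "__main__":
    received, seconds = run_transfer(10_000_000)
    print(f"{received} bytes in {seconds:.3f} s = "
          f"{received * 8 / seconds / 1e6:.0f} Mbit/sec")
```

Loopback numbers say nothing about a wide-area path; the sketch only shows how a single-stream, memory-to-memory measurement is structured.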

Network setup:

Network path:

The path spans two continents, Europe and North America, as shown in this picture:

Results:

According to the Internet2 LSR contest rule #5A, IPv4 TCP single stream, we achieved the following results, using a publicly available snapshot of the upcoming 2.0 release of the NetBSD operating system and an MTU of 4470 bytes:

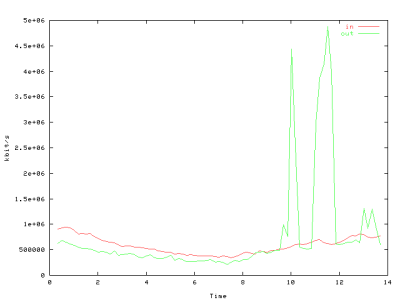

838860800000 bytes in 1588 real seconds = 4226 Mbit/sec
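The arithmetic behind that result line can be checked in a few lines of Python. The byte count and duration are taken from the ttcp output above; the helper name `throughput_mbit_per_s` is only an illustrative choice.

```python
def throughput_mbit_per_s(total_bytes, seconds):
    """Convert a byte count and a duration into megabits per second."""
    return total_bytes * 8 / seconds / 1e6


TOTAL_BYTES = 838_860_800_000  # from the ttcp result line
ELAPSED_S = 1588               # real seconds reported by ttcp

rate = throughput_mbit_per_s(TOTAL_BYTES, ELAPSED_S)
minutes, secs = divmod(ELAPSED_S, 60)
print(f"{rate:.0f} Mbit/sec over {minutes} min {secs} s")
# prints "4226 Mbit/sec over 26 min 28 s"
```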

The complete output from ttcp during the transmission is available, as seen from both the transmitter and the receiver. The test run lasted 1588 seconds (26 minutes, 28 seconds).

A tcpdump output is available for the first 20MB of a transmission, both in raw tcpdump format and as readable tcpdump output.

Internet2 Land speed record submission:

According to contest rule #7, the distance should be calculated as the terrestrial distance between the cities where we do router hops. Referring to the Great Circle Mapper, the distance is 16,343 km (10,157 miles). We used the airport of each city as its location.
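A distance of this kind is a sum of great-circle legs between consecutive hop locations. The sketch below shows one common way to compute it, the haversine formula on a spherical Earth. The Great Circle Mapper may use a different Earth model, so results can differ slightly. The function names and the mean Earth radius are assumptions of this sketch, and the router hop cities themselves are not listed here, so no attempt is made to reproduce the 16,343 km figure.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; an assumption of this sketch


def great_circle_km(a, b):
    """Haversine distance in km between two (latitude, longitude) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    h = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def path_length_km(hops):
    """Sum the great-circle legs between consecutive hop locations."""
    return sum(great_circle_km(p, q) for p, q in zip(hops, hops[1:]))


if __name__ == "__main__":
    # Hypothetical hop list, used only to show the call; not the real route.
    example_hops = [(0.0, 0.0), (0.0, 45.0), (0.0, 90.0)]
    print(f"{path_length_km(example_hops):.1f} km")
```

To apply it, `hops` would hold the airport coordinates of each city on the path, in order.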

The record submitted for the IPv4 single stream class is 69.073 petabit-meters/second (a 12% increase over the previous record).
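The contest metric is the product of throughput (bits per second) and distance (meters). As a rough check, the following Python sketch recomputes it from the figures published on this page. The function name is illustrative, and the result lands slightly below the submitted 69.073 figure; the small gap presumably comes from rounding in the published distance or time, which is a guess rather than something this page states.

```python
def petabit_meters_per_second(total_bytes, seconds, distance_km):
    """Throughput in bit/s times distance in meters, scaled to peta (1e15)."""
    bits_per_second = total_bytes * 8 / seconds
    return bits_per_second * distance_km * 1000 / 1e15


score = petabit_meters_per_second(838_860_800_000, 1588, 16_343)
print(f"{score:.2f} petabit-meters/second")
# prints "69.07 petabit-meters/second"
```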

Compared to the previous record, we can note that we achieved this using less powerful end hosts, with 150% longer distance, and with only about half the MTU size (which generates heavier CPU load on the end hosts). Most notable is perhaps that our result was achieved on the normal GigaSunet and SprintLink production infrastructures, shared by millions of other users of those networks.
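The CPU-load remark can be made concrete by estimating how many packets per second each end host has to process. The sketch below assumes 40 bytes of IPv4 and TCP headers per packet with no TCP options (options such as timestamps would shrink the payload a little further), and it uses a 9000-byte MTU as a hypothetical comparison point, since the previous record's exact MTU is not given here.

```python
HEADER_BYTES = 40  # IPv4 (20) + TCP (20), assuming no TCP options


def packets_per_second(total_bytes, seconds, mtu):
    """Estimate data packets per second when every packet is filled to the MTU."""
    payload_per_packet = mtu - HEADER_BYTES
    return total_bytes / seconds / payload_per_packet


pps_4470 = packets_per_second(838_860_800_000, 1588, 4470)
pps_9000 = packets_per_second(838_860_800_000, 1588, 9000)  # hypothetical MTU
print(f"MTU 4470: about {pps_4470:,.0f} packets/sec")
print(f"MTU 9000: about {pps_9000:,.0f} packets/sec")
print(f"ratio: {pps_4470 / pps_9000:.2f}x")
```

Under these assumptions, halving the MTU roughly doubles the per-packet work (interrupts, header processing) needed for the same throughput.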

End system hardware and configuration:

The end hosts are off-the-shelf Dell 2650 servers, each with only a single Intel Xeon 2.0 GHz processor, 512 Mbyte of RAM, and an Intel PRO/10GbE LR network adapter.

NetBSD operating system configuration (apart from default configuration, available in the snapshots as of April 2004):

Kernel compile-time parameters:

- options MCLSHIFT=12 # Increase MBUF-cluster size to 4k.

- options NMBCLUSTERS=65536 # Increase number of buffers.

- dge* at pci? dev ? function ? # Intel PRO/10GbE network adapter

Sysctl parameters:

- net.inet.tcp.init_win=30000 # Tune TCP startup time

- kern.sbmax=300000000 # Max memory a socket can use, 300MB

- kern.somaxkva=300000000 # Max memory for all sockets together, 300MB

- net.inet.tcp.sendspace=150000000 # Size of transmit window, 150MB

- net.inet.tcp.recvspace=150000000 # Size of receive window, 150MB

- net.inet.ip.ifq.maxlen=20000 # Max length of interface queue
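The large socket buffers follow from the bandwidth-delay product: a single TCP stream can have at most one window of data in flight per round trip. The sketch below estimates a lower bound on the round-trip time from the 16,343 km path and compares the resulting product with the 150 MB window. The propagation speed of 2e8 m/s (roughly two thirds of the speed of light, typical for optical fiber) is an assumption, and the real round-trip time is longer because of the actual fiber route and equipment delays; no measured RTT is given on this page.

```python
FIBER_SPEED_M_PER_S = 2.0e8  # assumed signal speed in fiber, about 2/3 of c

rate_bytes_per_s = 838_860_800_000 / 1588  # measured throughput
distance_m = 16_343 * 1000                 # contest distance, one way
window_bytes = 150_000_000                 # net.inet.tcp.sendspace / recvspace

# Lower bound on the round-trip time from propagation delay alone.
min_rtt_s = 2 * distance_m / FIBER_SPEED_M_PER_S

# Data that must be in flight to sustain the measured rate at that RTT.
bdp_bytes = rate_bytes_per_s * min_rtt_s

# Longest RTT at which a 150 MB window still sustains the measured rate.
max_rtt_s = window_bytes / rate_bytes_per_s

print(f"minimum RTT estimate: {min_rtt_s * 1000:.0f} ms")
print(f"bandwidth-delay product: {bdp_bytes / 1e6:.1f} MB")
print(f"150 MB window covers RTTs up to {max_rtt_s * 1000:.0f} ms")
```

Under these assumptions the window leaves headroom above the propagation-only estimate.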

Ifconfig settings:

ifconfig dge0 10.0.0.1/30 ip4csum tcp4csum udp4csum link0 link1 mtu 4470 up

- ip4csum, tcp4csum, udp4csum # Enable hardware checksums

- link0, link1 # Set PCI-X burst size to 4k.

Observation:

We noted that it is the PC hardware (excluding the Intel PRO/10GbE network adapter) that is the limiting factor in our setup. The operating system, the network adapter, and the network itself, including the routers, are capable of handling more traffic than this, but the PCI-X bus and the memory bandwidth in the end hosts are currently the bottlenecks.
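To put the bus limit in perspective, the measured rate can be compared with the theoretical peak of a PCI-X slot. The sketch below assumes a 64-bit slot clocked at 133 MHz; this page does not state the bus width or clock of the hosts, so both values are assumptions. Real transfers also carry protocol overhead, which means the usable bandwidth is below the peak.

```python
BUS_WIDTH_BITS = 64   # assumed PCI-X bus width
BUS_CLOCK_HZ = 133e6  # assumed PCI-X clock

peak_bits_per_s = BUS_WIDTH_BITS * BUS_CLOCK_HZ
measured_bits_per_s = 838_860_800_000 * 8 / 1588

utilization = measured_bits_per_s / peak_bits_per_s
print(f"theoretical PCI-X peak: {peak_bits_per_s / 1e9:.2f} Gbit/sec")
print(f"measured rate uses about {utilization:.0%} of that peak")
```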

Summary, according to Internet2 standards:

14 April 2004

- Record Set: IPv4 Single Stream

- I2-LSR Record: 69.073 petabit-meters/second

- Team Members

Sprint

- Network Distance: 16,343 kilometers

- Data transferred: 838.86 Gigabytes (838860800000 bytes)

- Time: 1588 seconds

- Software notes:

application: ttcp

- Hardware notes:

Network interfaces: Intel® PRO/10GbE LR

Special thanks to:

Peter Löthberg for ideas, help, debugging, US coordination etc. etc. His help has been invaluable!

Thanks to:

The Sprint staff in San Jose, Reston and Stockholm, for outstanding support and help.

Matt Baker and Travis Vigil of Intel, for help with the Intel network adapters.

TeliaSonera in Sweden, for lending us the fibers for the local tail in Luleå.

The staff at the Computer Centre at LTU, for their support and good discussions.

Also special thanks to Sprint for providing bandwidth, and for facilities and housing for the host in San Jose!

Contact information:

Hans Wallberg CEO, SUNET Hans.Wallberg@sunet.se

Börje Josefsson CTO, SUNET. LSR test coordinator bj@sunet.se

Peter Löthberg Sprintlink LSR coordinator roll@sprint.net

Anders Magnusson LSR technical test manager. ragge@ltu.se